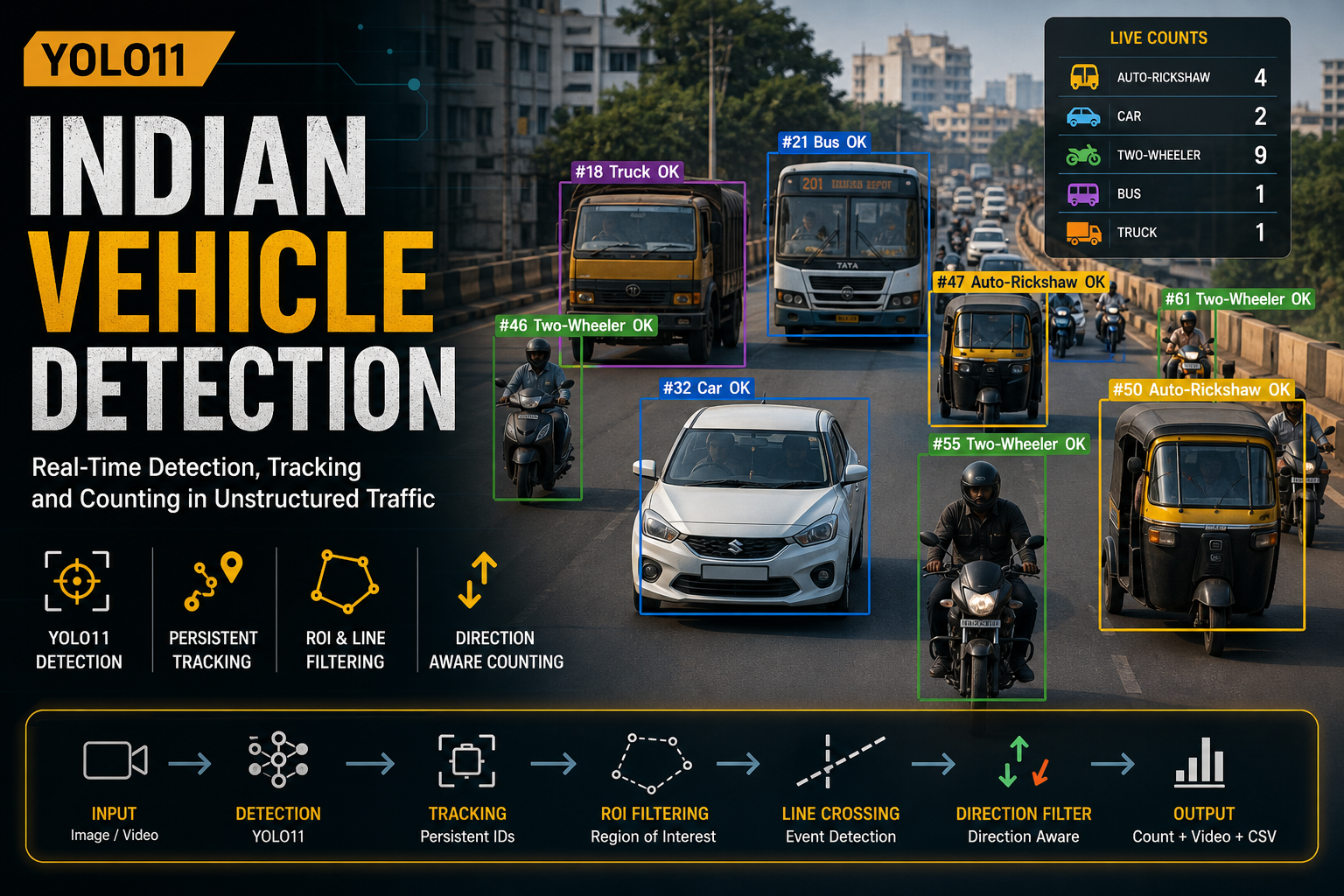

Indian Vehicle Detection using YOLO11

Posted on May 4, 2026


By Devendrasingh Solanki

Indian Vehicle Detection using YOLO11 (Real-World System)

Traffic in India doesn’t follow rules — and that’s exactly what makes vehicle detection difficult.

In a single frame, you’ll see two-wheelers cutting across lanes, auto-rickshaws overlapping with cars, and frequent occlusions. Most standard object detection systems struggle in these conditions because they are built for structured environments.

This project focuses on building a reliable vehicle detection and counting system for real-world Indian traffic, not just a demo model.

What Actually Fails in Practice

A basic YOLO-based detector works well on still images but breaks down on real traffic video:

- Same vehicle counted multiple times

- Vehicles missed during occlusion

- No understanding of direction

- Noise from irrelevant regions

So the problem is not detection — it’s consistency over time.

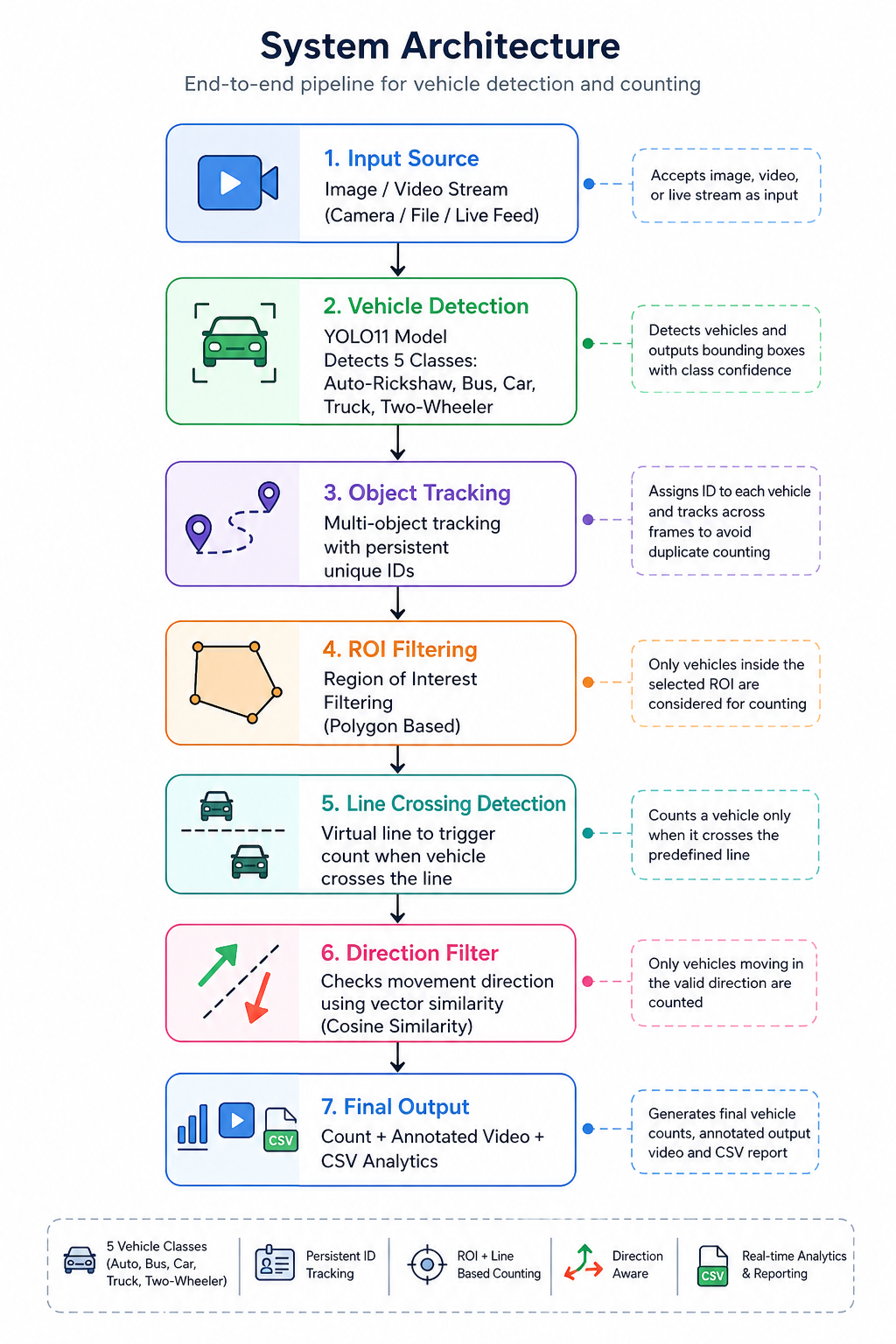

Core Idea

Instead of relying only on detection, the system is designed as:

Detection + Tracking + Motion Logic

Pipeline:

Input → Detection → Tracking → ROI → Line Crossing → Direction → Output

Each step fixes a real issue observed during testing.

System Architecture

Why This Architecture Works

Each stage exists because something broke without it:

- Detection (YOLO11) → Finds vehicles but is noisy

- Tracking → Prevents duplicate counting

- ROI Filtering → Removes unnecessary detections

- Line Crossing → Converts detections into countable events

- Direction Filtering → Ensures meaningful traffic flow analysis

This modular approach makes the system stable and production-ready.

Vehicle Detection

The model used is YOLO11x, trained on ~48,000 Indian traffic images.

Classes:

- Auto-Rickshaw

- Car

- Bus

- Truck

- Two-Wheeler

Some classes like Bicycle and Tractor were removed due to poor annotations, which improved overall accuracy.

Tracking (What Makes It Reliable)

Each vehicle is assigned a persistent ID:

track_history[track_id].append(center_point)

Persistent IDs give the system:

- No duplicate counting, because each ID is counted at most once

- Stable tracking across frames

- A per-vehicle trajectory that enables direction analysis
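To make the history update concrete, here is a minimal sketch of how per-ID trajectories could be stored. It assumes the tracker reports one `(track_id, box)` pair per vehicle per frame; `box_center`, `update_history`, and `MAX_HISTORY` are hypothetical names for illustration, not the project's actual code.

```python
from collections import defaultdict, deque

# Assumption: keep only the most recent points per track to bound memory.
MAX_HISTORY = 30  # hypothetical value

track_history = defaultdict(lambda: deque(maxlen=MAX_HISTORY))


def box_center(x1, y1, x2, y2):
    """Return the center point of an (x1, y1, x2, y2) bounding box."""
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)


def update_history(tracks):
    """Append the current center of every tracked box to its history.

    `tracks` is an iterable of (track_id, (x1, y1, x2, y2)) pairs, as a
    tracker might report them for one frame.
    """
    for track_id, box in tracks:
        track_history[track_id].append(box_center(*box))


# Two consecutive frames: vehicle 50 appears in both, vehicle 55 only in the second.
update_history([(50, (100, 200, 140, 240))])
update_history([(50, (104, 210, 144, 250)), (55, (300, 100, 340, 140))])
```

Capping the history with a `deque` is a design choice for long videos: direction and line-crossing checks only need the recent part of a trajectory.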

ROI Filtering

Only vehicles inside a defined region are processed:

if point_in_polygon(center, roi_polygon):
    process_detection()

This removes background noise and improves accuracy.
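The post does not show how `point_in_polygon` is implemented, so the following is only one possible version, using the standard ray-casting test. The ROI coordinates are made up for illustration.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: True if `point` lies inside `polygon`.

    `polygon` is a list of (x, y) vertices in order (either winding).
    Points exactly on an edge may be classified either way.
    """
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


# Hypothetical trapezoidal ROI covering a road, in pixel coordinates.
roi_polygon = [(200, 700), (1080, 700), (800, 300), (480, 300)]

centers = [(640, 500), (100, 500), (640, 100)]
in_roi = [c for c in centers if point_in_polygon(c, roi_polygon)]
```

Only the first center falls inside the trapezoid; the other two (roadside and sky, in this made-up layout) are dropped before any counting logic runs.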

Line Crossing Logic

Vehicles are counted only when crossing a virtual line:

if prev_y < line_y and curr_y >= line_y:
    count += 1

This converts detection into events, not frame-based counts.
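A crossing check alone can still fire twice if a center point jitters back and forth over the line. One way to guard against that is to remember which track IDs were already counted. The sketch below assumes a horizontal line and a top-to-bottom flow; `LineCounter` is a hypothetical helper, not the project's actual class.

```python
def crossed_line(prev_y, curr_y, line_y):
    """True if the point moved from above the line to on or below it."""
    return prev_y < line_y and curr_y >= line_y


class LineCounter:
    """Counts each track ID at most once when it crosses a horizontal line."""

    def __init__(self, line_y):
        self.line_y = line_y
        self.last_y = {}      # track_id -> center y in the previous frame
        self.counted = set()  # track IDs that have already been counted
        self.count = 0

    def update(self, track_id, center_y):
        """Feed one observation; return True if it triggered a count."""
        prev_y = self.last_y.get(track_id)
        self.last_y[track_id] = center_y
        if prev_y is None or track_id in self.counted:
            return False
        if crossed_line(prev_y, center_y, self.line_y):
            self.counted.add(track_id)
            self.count += 1
            return True
        return False


counter = LineCounter(line_y=400)  # hypothetical line position in pixels
# Track 50 crosses, jitters back above the line, then crosses again.
for y in (380, 395, 405, 398, 410):
    counter.update(50, y)
# Track 55 stays above the line the whole time.
for y in (100, 120, 140):
    counter.update(55, y)
```

Track 50 crosses the line twice in this sequence but contributes a single count, which is the event-based behavior described above.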

Direction Filtering

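Direction is checked with the cosine similarity between the track's motion vector and the expected flow direction. The dot product is divided by both magnitudes so the result is a true cosine in [-1, 1]:

cos_theta = dot(track_vector, direction_vector) / (norm(track_vector) * norm(direction_vector))

if cos_theta > threshold:
    valid_direction = True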

This ensures:

- Only valid traffic flow is counted

- Wrong-direction vehicles are ignored
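As a rough sketch of the direction check, the track vector can be taken as the net displacement between the oldest and newest points of a track. A dot product equals the cosine only for unit-length vectors, so this version divides by both magnitudes. The threshold of 0.5 and the helper names are assumptions for illustration.

```python
import math


def cosine_similarity(v1, v2):
    """Cosine of the angle between two 2-D vectors (0.0 if either is zero)."""
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    return dot / norm if norm > 0 else 0.0


def is_valid_direction(history, direction_vector, threshold=0.5):
    """Compare a track's net displacement with the expected flow direction.

    `history` is a sequence of (x, y) centers, oldest first. The default
    threshold of 0.5 (roughly 60 degrees of tolerance) is an assumed value.
    """
    if len(history) < 2:
        return False  # not enough motion to judge
    (x0, y0), (x1, y1) = history[0], history[-1]
    track_vector = (x1 - x0, y1 - y0)
    return cosine_similarity(track_vector, direction_vector) > threshold


# Expected flow: straight down the image (y grows downward in pixel coordinates).
direction_vector = (0, 1)

with_flow = [(100, 200), (102, 230), (105, 260)]     # moving down
against_flow = [(300, 400), (298, 370), (295, 340)]  # moving up
```

Here `is_valid_direction(with_flow, direction_vector)` passes and `against_flow` is rejected. A stationary or single-point track is also rejected, since it has no usable motion vector.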

Sample Output (Real Inference)

What’s Happening in This Frame

This output represents the full system working together:

- Bounding Boxes → Detection

- Tracking IDs (#50, #55, etc.) → Persistent tracking

- Class Labels → Vehicle types

- Live Counts (Top Left)

  - Auto-Rickshaw: 4

  - Car: 2

  - Two-Wheeler: 9

- “OK” Label → Vehicle passed:

  - ROI filter

  - Direction check

  - Line crossing condition

So this is not raw detection — it’s validated counting logic.

What This Output Proves

From real testing:

- Works in dense traffic

- Handles occlusion reasonably well

- No duplicate counting

- Stable tracking IDs

- Accurate counts based on movement

The biggest improvement came from:

Improving logic around the model — not just the model itself.

One Mistake That Changed the System

Initially, I used only detection + line crossing.

In dense traffic:

- Vehicles were counted multiple times

- Lane changes broke counting

The fix was introducing:

Tracking + Direction Filtering together

That made the system stable.

System Design (ML Pipeline)

The project follows a structured ML pipeline:

- Data Ingestion

- Data Validation

- Data Preprocessing

- Model Training

- Evaluation

- Deployment

Features:

- Stage-wise execution

- Reproducibility

- Easy retraining

Outputs

The system generates:

- Annotated video

- Vehicle counts

- CSV analytics

- Direction-filtered insights
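The post does not specify the CSV layout, so the following is only a sketch of how per-class counts could be exported with the standard library. The column names and the `counts_to_csv` helper are hypothetical; the counts are the ones from the sample frame above.

```python
import csv
import io


def counts_to_csv(counts, stream):
    """Write per-class vehicle counts as CSV rows to a text stream."""
    writer = csv.writer(stream, lineterminator="\n")
    writer.writerow(["vehicle_class", "count"])  # hypothetical column names
    for vehicle_class, count in sorted(counts.items()):
        writer.writerow([vehicle_class, count])


# Counts from the sample frame: Auto-Rickshaw 4, Car 2, Two-Wheeler 9.
counts = {"Auto-Rickshaw": 4, "Car": 2, "Two-Wheeler": 9}

buffer = io.StringIO()  # swap for open("counts.csv", "w", newline="") to write a file
counts_to_csv(counts, buffer)
csv_text = buffer.getvalue()
```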

Future Improvements

- Multi-camera tracking

- Speed estimation

- TensorRT optimization

- Real-time dashboard

- Cloud deployment

Conclusion

This project goes beyond object detection.

By combining:

- YOLO11 detection

- Persistent tracking

- Direction-aware filtering

the system becomes practical for:

- Traffic monitoring

- Smart city applications

- Urban analytics

Author

Devendrasingh Solanki

Machine Learning Developer