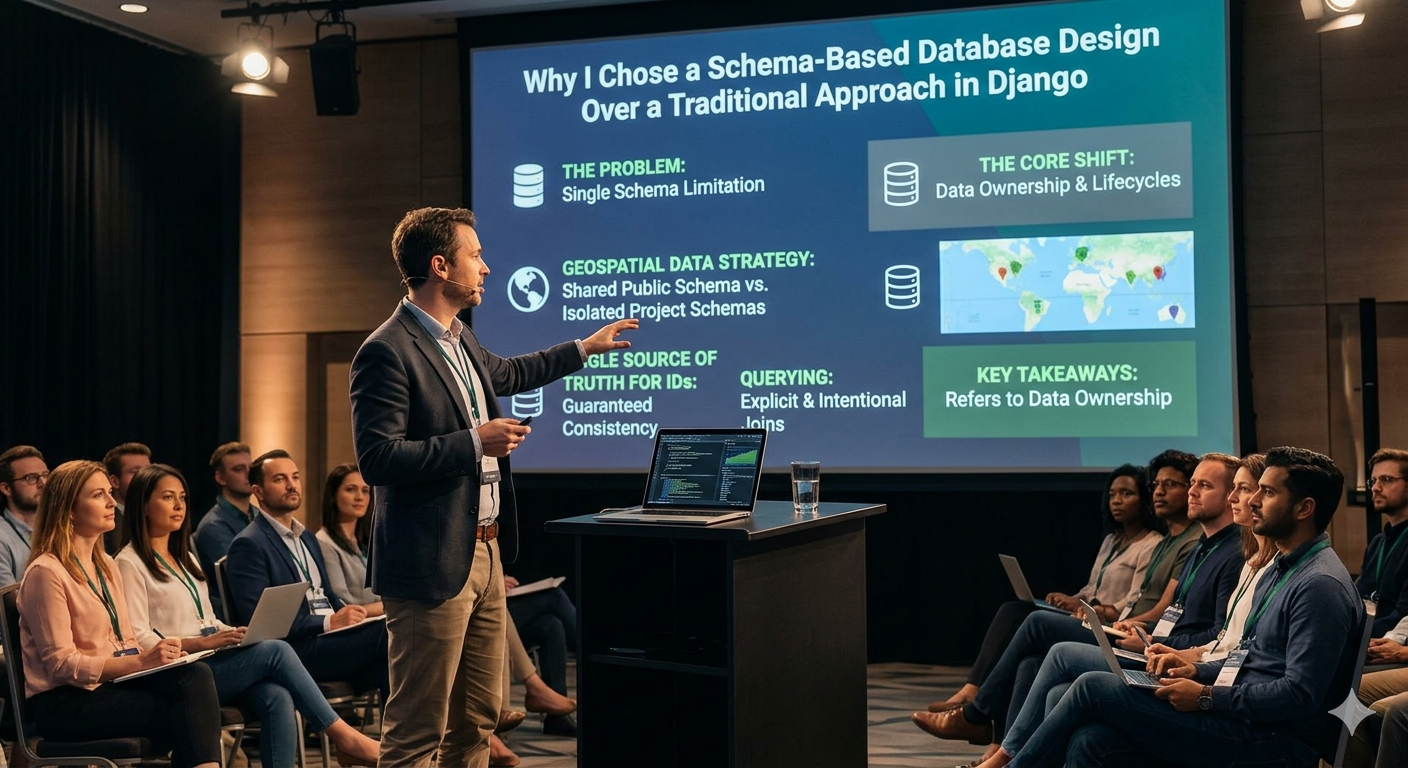

Why I Chose a Schema-Based Database Design Over a Traditional Approach in Django Introduction

Posted on April 30, 2026

4 min read

By Ankush Jadhav

The Problem Backend Developers Eventually Hit At the start, everything feels clean.

You create a Django project, define models, run migrations — all tables go into public, and life is simple.

But then the system grows.

You start dealing with:

- Large datasets

- Domain-heavy logic

- Reusable data across modules

- And in my case — geospatial data across multiple projects

And suddenly, the database becomes the bottleneck — not performance-wise, but design-wise.

Tables start piling up.

Relationships become harder to reason about.

And worst of all — you lose clarity about what data belongs where.

The Real Issue Was Not Django — It Was Data Organization

Django apps were modular. Code was clean.

But the database?

Everything lived in one place:

public.*

That meant:

- Shared data and project data were mixed

- Same type of data existed in multiple places

- Reusability became painful

- Any structural change felt risky

At some point, it became clear:

- The problem wasn’t models

- The problem was lack of separation at the database level

The Turning Point: Thinking in Terms of Data Ownership

Instead of asking:

“Which table should I create?”

I started asking:

“Who owns this data, and will it be reused?”

That one shift changed everything.

The Approach: Schema-Based Separation

I moved from a flat structure to a schema-based design in PostgreSQL.

Core idea:

publicschema → shared, reusable geospatial data- Project-specific schemas → dynamic, evolving data

The GIS-Specific Realization (This Changed Everything)

When working with geospatial systems, one thing becomes obvious:

Some data is universal.

- District boundaries

- Taluka boundaries

- Village geometries

This data:

- Does not change often

- Is used across multiple projects

- Should never be duplicated

So instead of copying it everywhere, I treated it as:

A central, authoritative dataset inside public

Project Data Lives Separately

Each project gets its own schema.

That schema contains:

- Project-specific features

- Asset layers (points, lines, polygons)

- Survey or transactional data

So instead of mixing everything:

public.boundaries ✅ shared

project_x.assets ✅ isolated

project_y.assets ✅ isolated

This ensures:

- No overlap

- No duplication

- No confusion

The Most Important Decision: Single Source of Truth for IDs

This is where things get really important.

Instead of redefining location data per project:

- I used IDs from the

publicschema as the only source of truth

That means:

- Every project references the same district/taluka/village IDs

- No duplicate identity systems

- No mismatched records

What This Solves

- Data consistency across schemas

- Reliable joins between datasets

- Easier aggregation and reporting

- Zero duplication of core entities

How Queries Became Better (and More Honest)

Once schemas were introduced, queries became explicit.

No more guessing.

SELECT b.geom, b.id, b.name

FROM project_schema.asset_data a

JOIN public.location_master b

ON a.location_id = b.id;

Now the query clearly tells:

- What is project data

- What is shared reference data

- How they are connected

This level of clarity is something you don’t get in a flat schema.

The Challenges (This Was Not Easy)

Let’s be real — this approach is not plug-and-play.

Django ORM Doesn’t Think in Schemas

Django assumes everything is in public.

So:

- You have to explicitly define schema in table names

- ORM abstraction becomes limited

- Sometimes raw SQL is unavoidable

Migrations Become Less Straightforward

- Schemas must exist before migrations

- Ordering matters

- Deployment needs extra care

This is where you stop relying blindly on Django and start understanding PostgreSQL properly.

Cross-Schema Joins Need Discipline

Just because you can join everything doesn’t mean you should.

If misused:

You recreate the same mess, just across schemas

So:

- Shared schema → read-only mindset

- Project schema → isolated logic

- Keep boundaries clean

Debugging Requires Awareness

Wrong schema = wrong data.

There’s no safety net here.

You need:

- Strong naming conventions

- Clear query understanding

- Awareness of data flow

What Changed After This

This wasn’t just a database change — it changed how I think about backend systems.

- I stopped thinking in tables → started thinking in data domains

- I stopped duplicating data → started enforcing single source of truth

- I stopped relying fully on ORM → started understanding SQL deeply

When This Approach Actually Makes Sense

Use this if:

- You are working with multiple projects sharing the same base data

- You deal with GIS or structured domain-heavy datasets

- You need clear separation of reusable vs project data

Avoid if:

- Your app is small

- No shared datasets exist

- You just need speed over structure

Final Thought

Most developers scale code first.

But real systems fail at the data layer, not the API layer.

Once you start thinking:

“This data belongs here, and only here”

Instead of:

“Let me just create another table”

That’s when your system starts becoming:

- Scalable

- Maintainable

- And actually understandable